Where Facts End and Story Begins

The other day I wrote an article about an experiment I ran with five different AI models (ChatGPT, Claude, Gemini, DeepSeek, and Qwen3-Max). I gave them a random news article and asked them to pull out the human story, leaving only the facts behind. The results were fascinating.

Each model had a different version of what “fact” meant. Many of the models left in words like “angry”, “frustrated”, and “upset” as though human feelings were fact. You can read the full article here. It led me to an interesting discovery about not only my framework, but also human perception in general.

I determined there were three distinct layers to human perception and experience. They are:

Layer 1 - The structural reality of what is, before language, interpretation, awareness, or human observation occurs. It does include automatic biological responses such as fight or flight, increased heart rate, or cortisol spikes.

Layer 2 - The functional interpretation of what happened. “The ball bounced. The door closed. The person spoke.” It’s the minimum level of language required to describe what’s happening, with as little interpretation as possible while still using language to describe events.

Layer 3 - The human interpretation layer. Instead of saying, “The door closed,” which would be a layer 2 description, we say, “They slammed the door.” The word “slammed” describes how the door closed, making it an interpretation instead of just a statement of the fact that the door closed.

AI is generally programmed to work within the third layer. It assumes the human interpretation is correct, so when I ask it to take out the human story without clarifying what I mean, it leaves in human feelings.

Human feelings are not fact. They arise from biological activation, but the meaning we attach to them belongs to Layer 3. They are not structural reality independent of interpretation.

While the AI finding was definitely intriguing, what piqued my interest was how language affects our response to what’s happening around us.

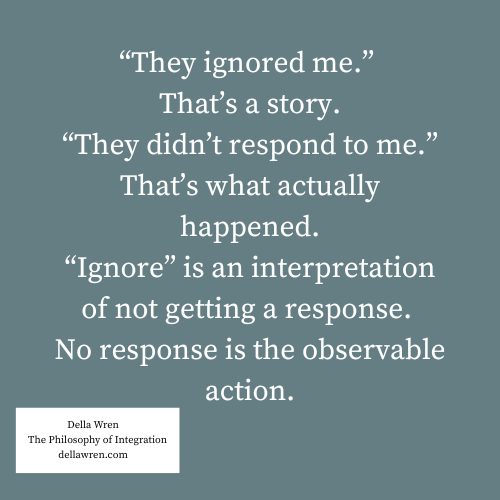

If somebody doesn’t respond to you, are they ignoring you or did they simply not respond? Notice the difference the interpretation makes to how you feel about what happened.

When you don’t tell the story about being ignored, the person not responding to you doesn’t matter as much. No response doesn’t generate the same feeling or need to defend yourself that being ignored does. It allows the experience to just be what it is at a layer 1 or 2 level without needing to do anything about it.

The language doesn’t seem like it should be that important, especially when it’s just in our heads. But we frequently say things like “words matter”. If we believe that words matter, then how we narrate the experience in our heads also matters.

In terms of the framework, we’re narrating the cause, which has an internal effect on us, both mentally and emotionally. If the narration in your head changes how you respond externally, then you’re no longer responding to the observable event (layer 1 or 2). You’re responding to your interpretation of that reality, which is layer 3. Layer 3 is a distorted or modified view of the first two layers.

The goal is not to do away with language or stop using words like slammed or yelled. The goal is to recognize how those words shape your perception of reality before you react based on that interpretation.

The framework lives in layer 2, which is the functional level of description needed to communicate and be understood. What the framework indirectly does is encourage us to pay closer attention to the narration before responding to observable events. Notice the difference between the event and the narration.

It is the gap between the event (layer 1) and the narration (layer 3) that causes distortion, pain, and unexpected outcomes. The middle ground, layer 2, is where we can learn to work with reality directly, remove some of that narration, and make better choices about how to respond to our experience.

This article is part of the AI as Structured Thinking series.

You can explore the full sequence here: https://substack.dellawren.com/t/ai-as-structured-thinking