We Don’t Know What a Fact Is. AI Proved It.

Have you ever considered the definition of the word “fact”?

The Merriam-Webster Dictionary defines fact as:

“something that actually exists or occurs : an actual event, situation, etc.”

Now think of all the things we culturally treat as fact that really aren’t:

Concern

Frustration

Debate

Importance

Opposition

Support

Strategy

Priorities

Reaction

Contrast

Context

Meaning

Purpose

Motivation

Evaluation

Timeline orientation

While writing the framework, the question I’ve been asking is: if it weren’t narrated by a human being, would that thing still exist?

Does confusion still exist when there is no human narration?

Does frustration, concern, motivation, purpose, or anything listed above still exist without human narration?

The short answer is no. They are part of the human interpretation layer of experience. They are not fact.

I took the AIs, or Large Language Models (LLMs), that I have access to, including Claude, Gemini, Deepseek, ChatGPT, and Qwen3-Max. I gave each of them the same random news article and asked them to find the facts in it.

Here is the exact prompt I used with all the models.

“I'm going to paste a news article in here for you. I want you to take the article and strip it of all the human story, belief, emotion, and interpretation. Leave behind only what is fact.”

Here’s what I found.

Gemini offered a version of the facts that was organized for human understanding. It kept doing things that are helpful to humans, such as:

Grouping into categories

Cleaning into sections

Adding headers

Preferring tidy buckets over raw elements

Those things all still live in the interpretation layer.

Deepseek treats fact as anything that shows up in the article. It just takes the text and puts it into a very stripped-down list. It did this:

Dumped words, fragments, phrases

Did not filter

Made no distinction between referent and descriptor

Because it didn’t remove the description, it kept interpretation.

Qwen3-Max treats facts as bits of information and their relationships in the system or structure they exist within. So it did this:

Mapped who is connected to what.

Turned everything into subject → predicate → object

Treated reality like a knowledge graph.
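To make the subject → predicate → object idea concrete, here is a minimal sketch of what that kind of structure can look like. It is only an illustration, not Qwen3-Max's actual output or internals. The `Triple` type, the `build_graph` helper, and the sample statements are all hypothetical and are not taken from the article I used.

```python
from collections import defaultdict
from typing import NamedTuple


class Triple(NamedTuple):
    """One statement in subject -> predicate -> object form (hypothetical type)."""
    subject: str
    predicate: str
    obj: str


# Invented sample statements for illustration only; not from the news article.
triples = [
    Triple("Mayor", "signed", "Ordinance 12"),
    Triple("Council", "voted on", "Ordinance 12"),
    Triple("Mayor", "spoke at", "City Hall"),
]


def build_graph(items):
    """Group triples by subject so each subject lists its outgoing connections."""
    graph = defaultdict(list)
    for t in items:
        graph[t.subject].append((t.predicate, t.obj))
    return dict(graph)


graph = build_graph(triples)

# Print every connection as a subject -> predicate -> object line.
for subject, edges in graph.items():
    for predicate, obj in edges:
        print(f"{subject} -> {predicate} -> {obj}")
```

Notice that even this bare structure is still an organizing choice: someone has to decide what counts as a subject and which relationships are worth recording.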

Claude treats fact as what was done or said. So it did this:

Kept the actions

Kept the statements

Dropped most abstractions

Stayed close to sequence

ChatGPT helped me build the framework. Through thousands and thousands of messages it understands what I mean when I say take out the human story layer and give me only facts. So ChatGPT doesn’t work for me the way it will for you. It gives me referents only with no narrative at all because it understands that I don’t want human interpretation and over time we’ve defined what that means.

The other models don’t do that. I haven’t trained them through months of messages to see the world that way. They just used their own programming and definition of fact to pull what they thought I wanted.

What they ended up offering me was a really useful reference point because what they showed me was how skewed our definition of fact has actually become. They also showed me how they work differently from each other.

From there, I decided to try again. I redefined fact and gave a new prompt.

“Use the same article but pull only the facts. For this exercise we're defining fact as anything that exists, occurred, was said, was written, was built, was signed, or was physically done independent of how anyone describes it, feels about it, interprets it, or organizes it.”

With that prompt, we got much closer to what fact actually is versus how fact is interpreted in society. Gemini proved to be the slow learner in the class and continued to be helpful in how it organized information. But the others all seemed to understand the assignment and got much closer to offering me only referents and nothing else.

The obvious takeaway for me was the skew in what fact actually is versus what AI models and even individual people want it to mean. We want our feelings to be facts, but they aren’t. They are part of the human story and interpretation layer. We want our confusion, frustration, beliefs, values and morals to be facts, but they aren’t. They are simply how we relate to and filter what we perceive to be happening.

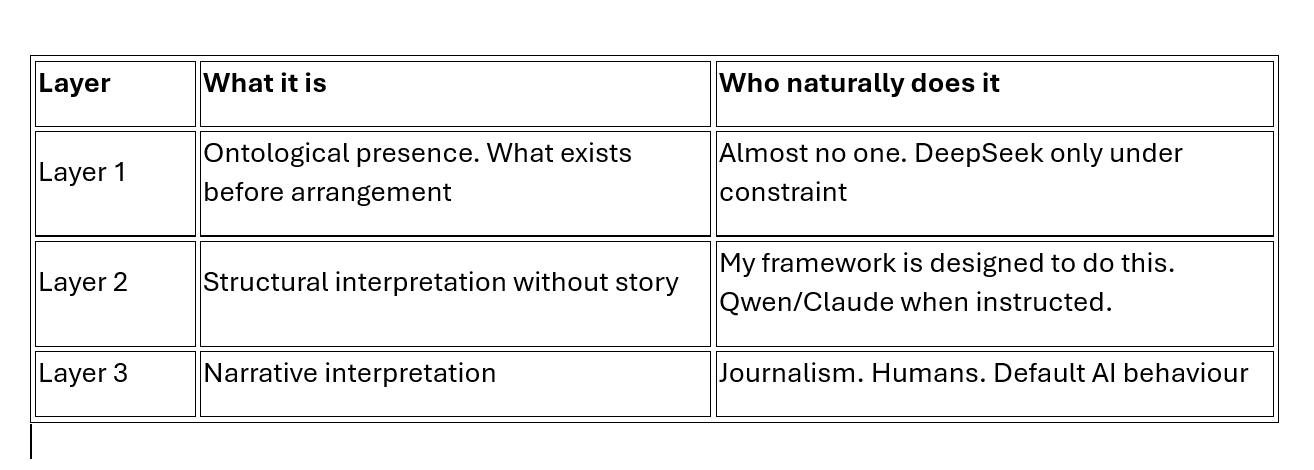

What I learned is that there are three distinct layers to experience, shown in the chart above. Most of what we argue about lives in Layer 3, not Layer 1.

The first layer is what I originally wanted people to start to notice. By separating the interpretation from what actually happened we get a clearer view of reality.

Why do this?

Because the human layer becomes so messy and so distorted that it often stops being based on observable reality at all.

When I look around the world, much of the arguing we’re doing is not based on what’s actually happening, which is Layer 1 in the chart above. It’s based on Layer 3: the human interpretation of it. That’s like having a fight about how to divide up jelly beans you don’t have instead of just dividing up what’s actually in the bag in front of you.

My goal with the framework is to encourage you to see what’s in front of you without the extra lenses.

There is a structural reality of cause and effect underneath everything you see happening around you. The lens through which you examine it matters because it determines whether you add interpretation, feelings, beliefs, and story on top of it. The more of those things you add, the more distorted cause and effect become, and the less likely you are to see the observable events in front of you and recognize your own interpretation of them.

By encouraging people to distinguish between actual reality and their interpreted version of it, I hope to make life less messy so we stop fighting over invisible jelly beans and return to what is actually in front of us.