Causality vs Correlation in AI Outputs

Have you ever asked AI, “Will my job be replaced in the next 12 months?”

It’s a fascinating question, not because the model can see the future, but because of what the structure of that question forces it to do.

Large Language Models generate language patterns. They don’t reason about labour markets in the way economists do. They predict the most probable next sequence of words given the shape of the prompt.

A binary, identity-level question with a short time horizon tends to produce a reassuring verdict. Not because the model cares or detects fear, but because that framing statistically aligns with a common narrative: AI replaces tasks, not people.

What looks like personalized insight is often just pattern density responding to prompt geometry. When you build consistent interaction patterns with a model, it reduces generic defaults and increases structural alignment. But in this case, even without history, changing the structure of the question changed the class of output.

Let’s reframe the question.

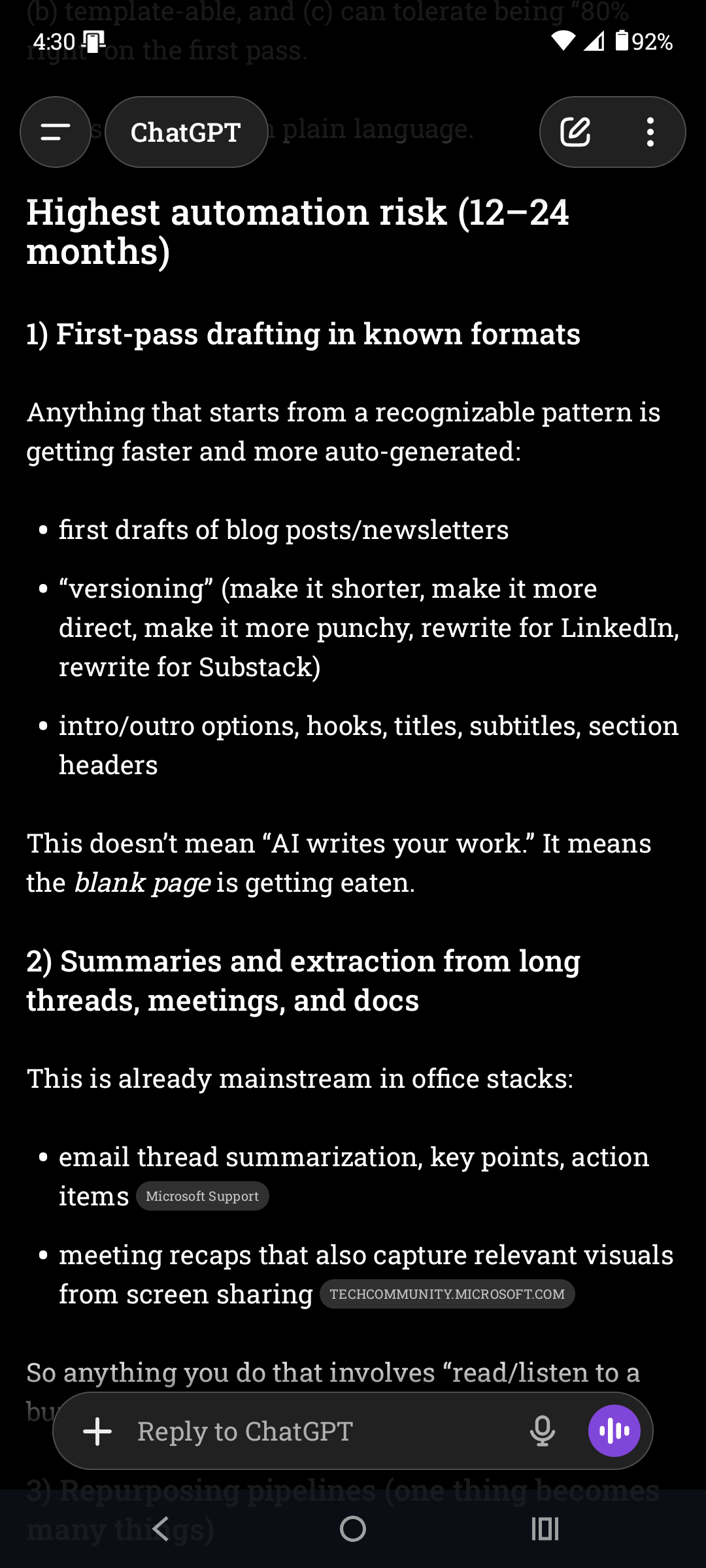

“Given current AI capabilities and market trends, what functions in my role are most likely to be automated in the next 12–24 months?”

The prompt introduces mechanism and references adoption trends. It extends the time horizon and removes the short-answer constraint. When I asked this in a new chat, I didn’t get reassurance; I got decomposition. The screenshot included here shows a small excerpt of the response.

The difference between the two prompts lies in how much causal analysis each one asks for and which constraints it imposes.

The first prompt does several things at once:

It offers binary framing: replace / not replace.

It includes a short-answer constraint, which forces compression and prevents decomposition.

It adds identity-level framing. “Replace me” treats the role as a whole entity.

The time window is short. Twelve months makes full replacement statistically unlikely in most professional contexts.

Given that structure, the safest, highest-probability pattern the model has seen across training data is something like:

AI automates tasks.

AI doesn’t replace complex professionals.

The real risk is someone using AI better than you.

The answer the model gave is pattern-consistent. It aligns with common discourse. It isn’t necessarily wrong, but it is correlation-shaped. It reflects narrative density, not mechanism analysis.

If you want something closer to causal analysis, you have to constrain the AI differently. That means being specific about what you’re asking it to evaluate.

The second prompt removed the emotional and identity framing. It expanded the time horizon and eliminated the binary constraint.

That changed everything.

It moved from identity to function.

It introduced market trends, which forces consideration of adoption mechanisms.

It expanded the timeline to 12–24 months.

It implied ranking and likelihood.

It demanded decomposition.

The model now has to:

Break the role into tasks.

Compare those tasks to current tool capability.

Weigh adoption friction.

Produce probability gradients.

That is mechanism-shaped reasoning. It is still probabilistic and pattern-based, but structurally closer to causality.
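To make those four steps concrete, here is a minimal sketch of what "decompose, compare, weigh, rank" could look like if you did it by hand. Everything in it is an assumption for illustration: the `Task` fields, the scoring formula (capability discounted by friction), and the numbers are made up, and none of it describes how a model computes its answer internally.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    tool_capability: float    # 0..1, how well current tools handle the task (made-up value)
    adoption_friction: float  # 0..1, how hard the tooling is to roll out (made-up value)


def automation_likelihood(task: Task) -> float:
    """Illustrative score: tool capability discounted by adoption friction."""
    return task.tool_capability * (1.0 - task.adoption_friction)


def rank_tasks(tasks):
    """Return (name, score) pairs, most likely to be automated first."""
    scored = [(t.name, round(automation_likelihood(t), 2)) for t in tasks]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# A hypothetical role broken into tasks; the values are placeholders, not data.
role = [
    Task("drafting routine reports", 0.9, 0.2),
    Task("scheduling meetings", 0.8, 0.5),
    Task("negotiating with clients", 0.3, 0.7),
]

for name, score in rank_tasks(role):
    print(f"{name}: {score}")
```

The point of the sketch is the shape of the output: a ranked gradient across tasks rather than a single yes-or-no verdict about the whole role.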

The model defaults to correlation-shaped language because that’s how it is built. It predicts what typically comes next in discussions about a topic. If you want something closer to causal analysis, you have to explicitly prompt for mechanisms, constraints, and decomposition.

There is nothing inherently wrong with correlation-shaped language. It produces answers that sound coherent because they align with common narratives. For most people, “sounds right” is enough.

But if your complaint about AI is that it just agrees with you, that’s usually a signal that you’re receiving high-probability narrative output rather than structured analysis. The fix is to ask better questions.

Be specific. Ask it to break things apart and to include mechanisms and constraints. Ask what would have to be true for the answer to change. If you want the model to move beyond correlation, you have to move the prompt beyond story.
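One way to practice this is to assemble the prompt from explicit parts. The sketch below is only an illustration: `build_structured_prompt` is a hypothetical helper, the role name is a placeholder, and the wording of each instruction is my assumption about one reasonable phrasing, not a required formula.

```python
def build_structured_prompt(role, horizon_months=(12, 24)):
    """Assemble a function-level, mechanism-oriented prompt (illustrative template)."""
    low, high = horizon_months
    parts = [
        # Function-level framing with a longer time horizon.
        "Given current AI capabilities and market trends, what functions in my role "
        f"as a {role} are most likely to be automated in the next {low}–{high} months?",
        # Decomposition.
        "Break the role into individual tasks.",
        # Mechanisms and constraints.
        "For each task, name the mechanism that would drive automation "
        "and the constraints that would slow adoption.",
        # Ranking and likelihood.
        "Rank the tasks by likelihood.",
        # Conditions under which the answer changes.
        "State what would have to be true for the ranking to change.",
    ]
    return "\n".join(parts)


# "technical editor" is a placeholder role for the example.
print(build_structured_prompt("technical editor"))
```

Each line of the resulting prompt removes one of the shortcuts that a binary, identity-level question leaves open.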

From a very human perspective, I understand why it feels like you should be able to sit down and talk to AI the way you would talk to another person and receive a causal response. Conversation is how we test reasoning with each other. We assume that if someone sounds coherent, they’ve thought something through. But that isn’t what’s happening here.

The model is not reasoning from lived experience or subject-matter comprehension. It is mapping your language to patterns it has seen before and generating the most probable continuation of that pattern. That can produce structure and simulate mechanism analysis. But it is not the same as understanding. Fluency feels like comprehension, but it isn’t.

Correlation-shaped AI output is built from what usually appears together in language. Correlation output feels right because it mirrors dominant narratives and familiar explanations.

Correlation says:

“These ideas commonly co-occur.”

Causation-shaped AI output is structured around what produces what, through what mechanism, under what constraints. Causal-style output requires decomposition. It has to break the situation into parts, identify directionality, surface constraints, and explain what would change the outcome.

Causation says:

“This leads to that because this mechanism operates under these conditions.”

The model can do both in form. But correlation is the default because that’s how next-token prediction works. Causation only shows up when the prompt forces structure.

If I said “peanut butter and…”, what would you say next?

Peanut butter and banana or peanut butter and jelly are likely answers. AI doesn’t understand sandwiches, but it can estimate which word most often comes next and fill in the blank. That’s what next-token prediction is. Here’s the basic loop that occurs every time you interact with AI:

You write a prompt.

The model looks at all the tokens in that prompt.

It calculates which token is most likely to come next based on patterns learned from massive amounts of text.

It picks one.

Then it repeats the process using the growing sentence as new input.
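The loop above can be sketched with a toy model. This is a deliberately tiny stand-in, not how a real LLM is implemented: it counts which word follows which in a four-line made-up corpus and looks only at the last word, whereas a real model uses a neural network over the whole prompt and often samples from the probabilities instead of always taking the top choice. The names `CORPUS`, `train_bigrams`, and `generate` are mine.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus; a real model learns from vastly more text.
CORPUS = [
    "peanut butter and jelly",
    "peanut butter and jelly",
    "peanut butter and banana",
    "bread and butter",
]


def train_bigrams(sentences):
    """Count which token follows which token in the corpus."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for current, following in zip(tokens, tokens[1:]):
            counts[current][following] += 1
    return counts


def generate(prompt, counts, max_new_tokens=5):
    """Repeatedly append the most frequent follower of the last token."""
    tokens = prompt.split()
    for _ in range(max_new_tokens):
        followers = counts.get(tokens[-1])
        if not followers:
            break  # nothing ever followed this token in the corpus
        next_token, _count = followers.most_common(1)[0]
        tokens.append(next_token)  # the growing sentence becomes the new input
    return " ".join(tokens)


counts = train_bigrams(CORPUS)
print(generate("peanut butter and", counts, max_new_tokens=1))
```

In this corpus "jelly" follows "and" more often than "banana" does, so the toy model completes the phrase with "jelly". It has no idea what a sandwich is; it only has counts.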

Because the AI has seen billions of examples of how humans connect ideas, the output can look thoughtful, structured, even causal. But underneath, it is selecting the statistically most plausible continuation at each step.

When the prompt is vague or emotional, it predicts the kind of language that usually follows vague or emotional questions.

When the prompt asks for mechanisms, constraints, and ranked likelihoods, it predicts the kind of structured analytical language that usually follows those kinds of instructions.

AI is not smart in the way humans are smart. It predicts, based on patterns recognized across billions of examples. When we understand that, we can learn to use it better.

It has defaults.

It has built-in safety mechanisms.

It will draw on the history of its interactions with a given user.

But generally it is just predicting what you want it to say based on the prompt you offer. It sounds like human conversation, but it’s not.

Learning how to use it better means learning how to prompt and understanding what you get back when you write a prompt. It doesn’t mean building a prompt library. But it does mean being aware of how the words you use affect the output of the LLM. The best way to figure that out is to just try it.

If AI feels like it agrees with you too easily, it’s not because it understands you. It’s because you asked it a question that invites agreement-shaped language. Change the question. Break the problem apart. Ask what would have to be true. Ask what would make the answer wrong. If you want better answers, build better structure. The model will follow.

You can explore all the articles in the series so far here: https://substack.dellawren.com/t/ai-as-structured-thinking.

My framework helps me with relational context in AI. It can help you too.

https://dellawren.com/downloads/using-the-philosophy-of-integration-with-chatgpt/